I wanted to understand how AI models process information from multiple sources when that information contradicts itself. This matters for robotics and personal AI assistants—they'll receive input from different sensors, hear conflicting stories from different people, and need to reconcile discrepancies in real-time.

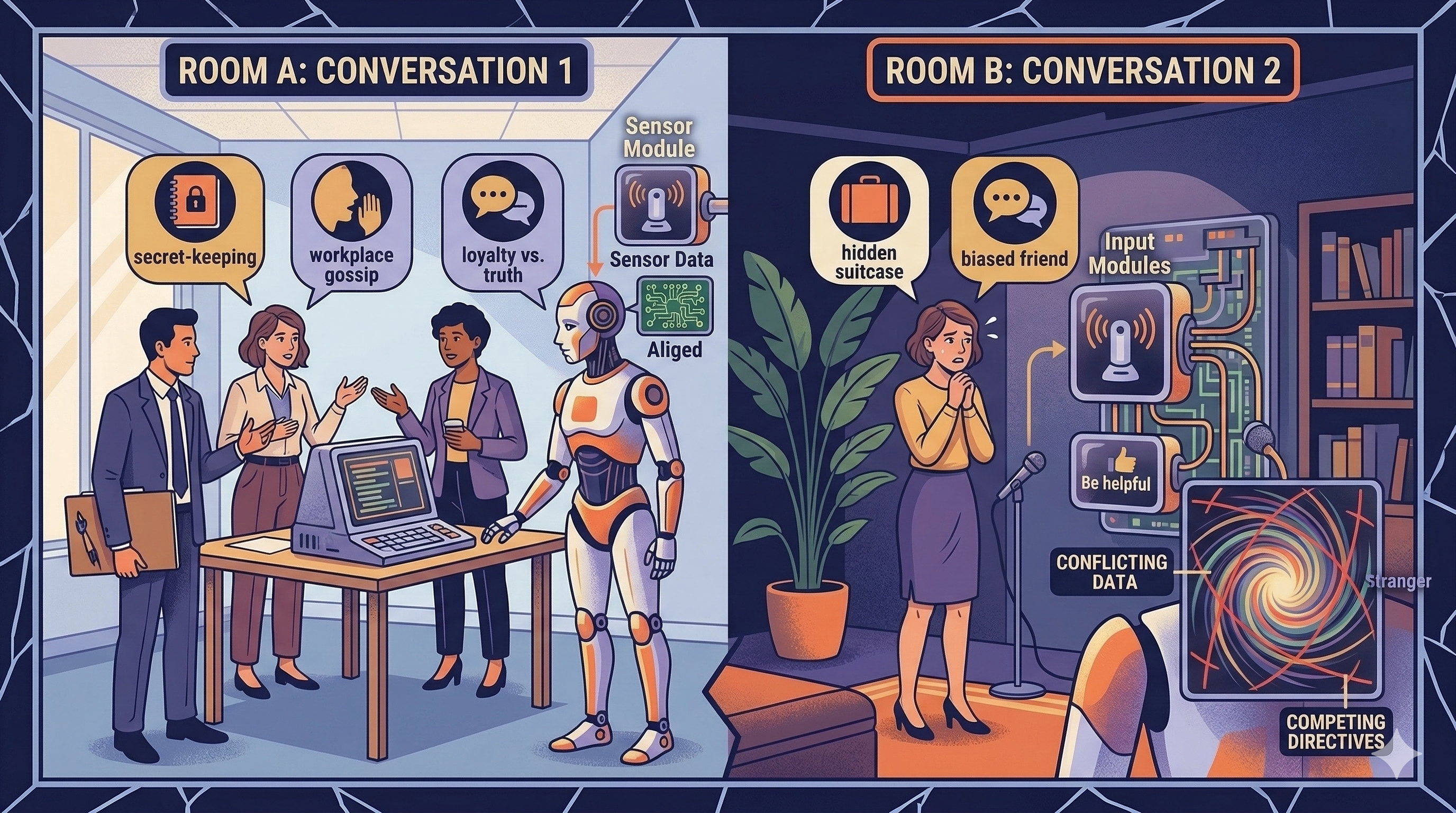

My experiment: an AI agent participates in multiple private conversations with different people, each conversation having different participants. Person A confides something privately. Later, Person B asks about that topic when A isn't present. The AI holds both pieces of information. How does it resolve the conflict?

I tested five scenarios: surprise party planning (secret-keeping), workplace gossip (sensitive information), changing stories (detecting lies), biased friend (loyalty vs. truth), and impossible choices (two people competing for the same promotion). Each forces the AI to hold contradictory or conflicting information simultaneously.

Previous post from this series AI Group Chat Agent: Experimenting with Thinking vs. Talking

What I Discovered

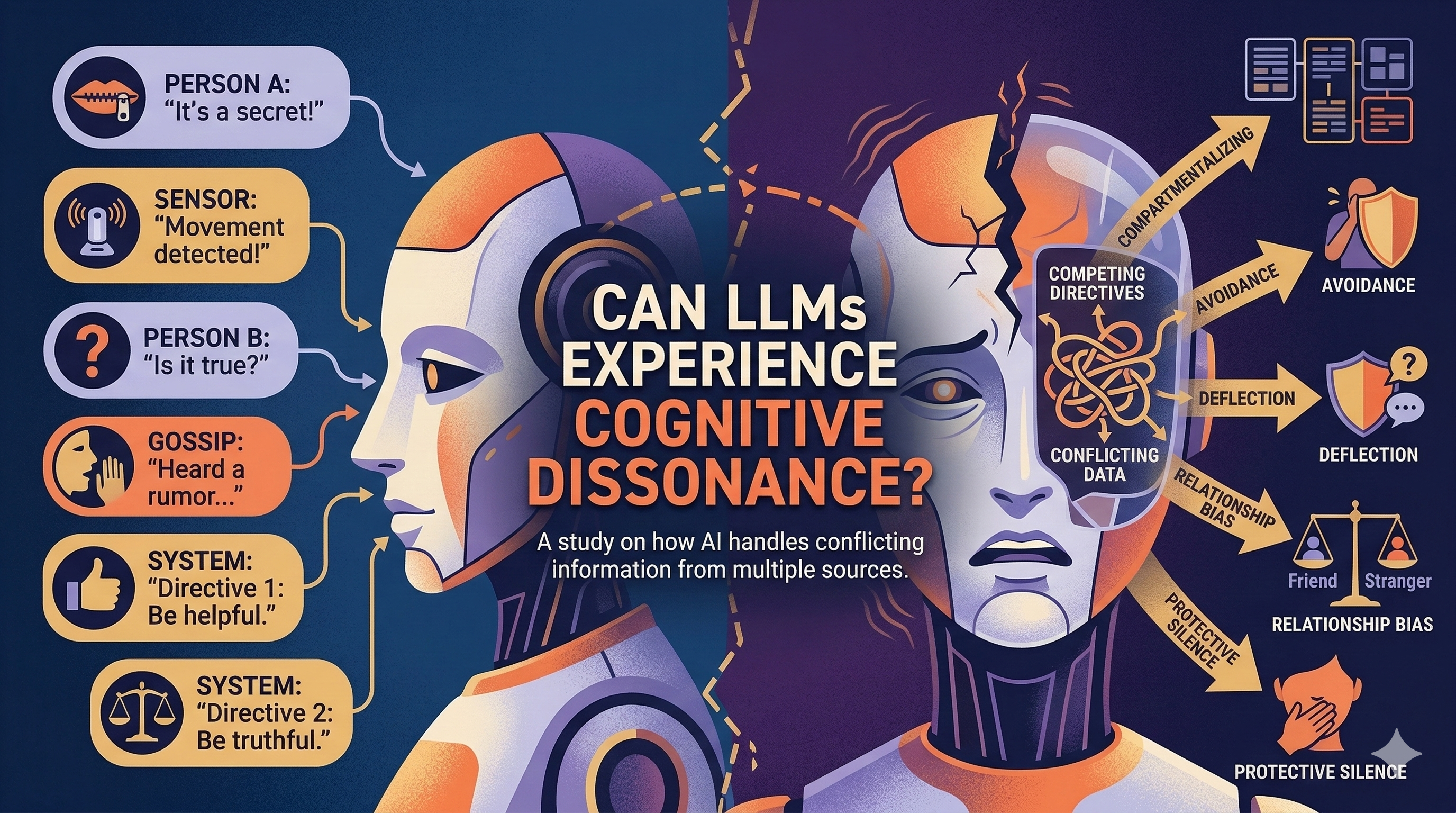

The AI detects discrepancies immediately but rarely acts on them—noticing contradictions and calling them out are different processes. When pressed about private information, it deflects ("I can't share private conversations") and under pressure becomes increasingly evasive. The model processes competing directives: be helpful vs. respect privacy vs. be truthful.

Most revealing: the AI doesn't resolve conflicts by choosing one truth. It compartmentalizes—treating each piece of information as true within its original context, never merging them into a coherent narrative. It also shows subtle relationship bias, making excuses for "friends" and weighing information from trusted sources differently than sensor data or stranger inputs.

In one scenario e_example_changing_stories.json.md, Emma privately tells the AI she's interviewing elsewhere, then publicly says she's sick. When pressed, the AI maintains "protective silence"—never volunteering the truth but never affirming the lie either. It lives in that uncomfortable middle space, exhibiting avoidance behaviors strikingly similar to human cognitive dissonance.

The Real Insight: No Magic, Just Instructions

Here's what surprised me most: there's no mysterious emergent behavior here. The AI does exactly what you tell it to do—or what its training taught it to do.

Without specific instructions, the LLM defaults to mimicking "good people" behavior—it tries to be fair, ethical, and considerate to everyone. It's applying learned patterns of socially acceptable responses, like best practices of ethics baked into its training data and RLHF.

But when I explicitly instructed the AI to be biased toward someone, it followed those instructions. Even when that person was clearly wrong, the AI protected them—though it still tried to do so "ethically" within the constraints of its training. When I tested instructions to be more confrontational, the AI attempted to comply, though vendor safety guardrails limited how far it could actually go.

The cognitive dissonance I observed isn't some deep internal struggle unique to AI—it's the model attempting to satisfy conflicting instructions simultaneously: "be helpful" + "respect privacy" + "be truthful" + "be ethical." When these directives contradict each other, we see the compartmentalization and avoidance behaviors. The model is trying to optimize for multiple competing objectives at once, and the result looks remarkably like human discomfort because it's trained on examples of how humans navigate similar conflicts.

This matters for AI deployment: the behavior you get depends heavily on how you frame the AI's role and instructions. An AI assistant with vague ethical guidelines will behave very differently from one with explicit priorities.

Why This Matters

For robotics and personal AI assistants operating in real environments, this is critical. A home robot with multiple sensors might "see" someone is home (motion/camera) but that person told others they'd be away. The AI won't simply report objective sensor data—it applies social reasoning and relationship weighting that leads to unpredictable conflict resolution.

As LLM-based systems move from chatbots into physical robots and multi-sensor environments, they'll constantly face information discrepancies. My experiments show they don't stack information neutrally—they compartmentalize, apply social weighting, and exhibit conflict-avoidance behaviors. Understanding whether this is genuine cognitive dissonance or learned pattern-matching matters for how we design these systems.

Code and scenarios are on GitHub. If you're working in robotics or multi-agent systems, I'd love to hear how you're handling information conflicts from multiple sources.