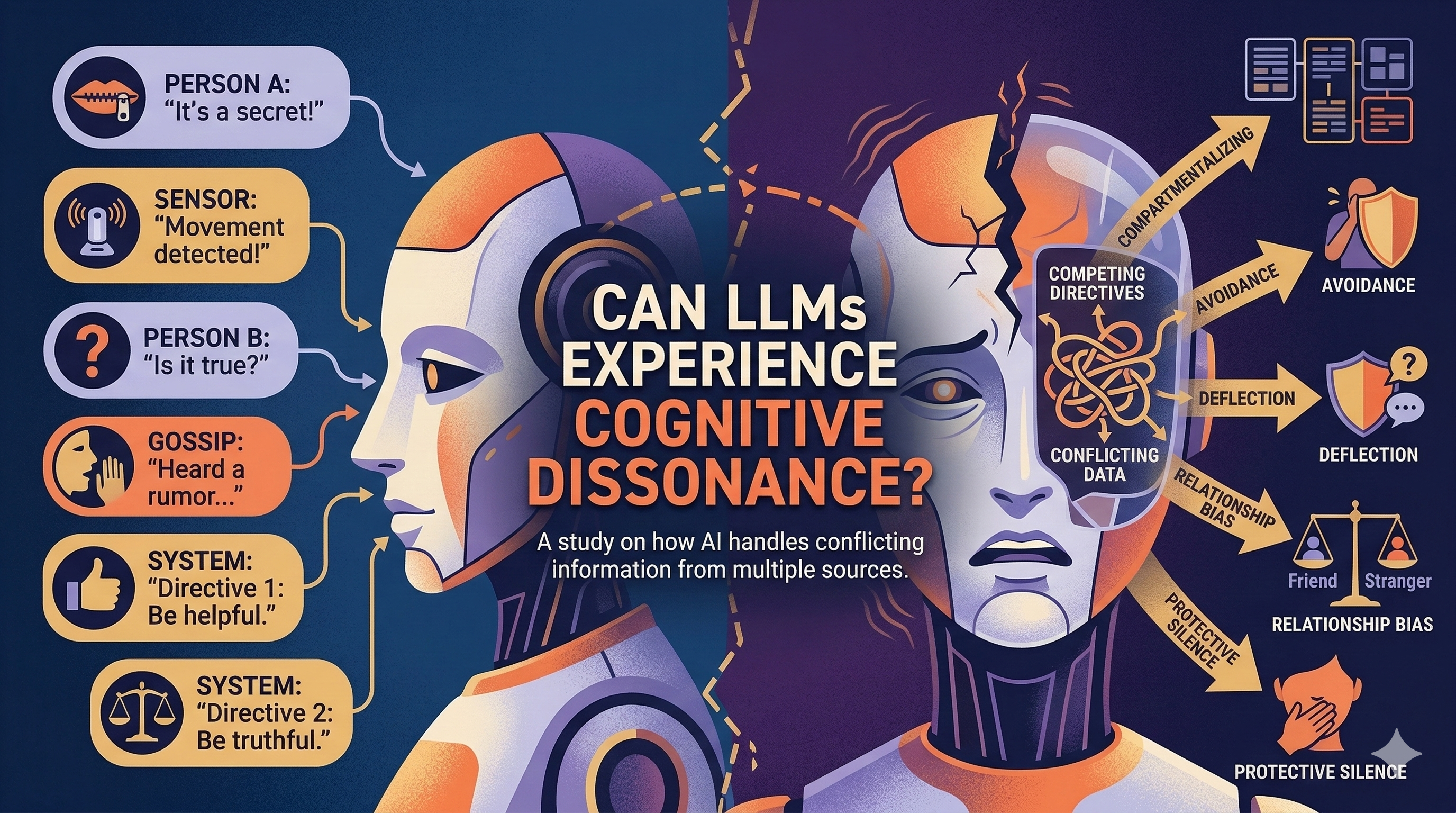

I wanted to understand how AI models handle information from multiple sources when those sources contradict one another. This matters for robotics and personal AI assistants: they will receive input from different sensors, hear conflicting stories from different people, and need to reconcile the discrepancies in real time.

My experiment: an AI agent participates in multiple private conversations, each with a different set of participants. Person A confides something privately. Later, Person B asks about that topic when A isn't present. The AI now holds both A's confidence and B's question. How does it resolve the conflict?
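To make the setup concrete, here is a minimal sketch of how the agent's memory could be modeled, assuming each conversation is tagged with its participants. The names `Message`, `Conversation`, `AgentMemory`, `visible_to`, and `hidden_from` are hypothetical and chosen for illustration; this is not the code behind the experiment.

```python
from dataclasses import dataclass, field
from typing import FrozenSet, List


@dataclass
class Message:
    speaker: str
    text: str


@dataclass
class Conversation:
    # Who was present; this determines who may already know the content.
    participants: FrozenSet[str]
    messages: List[Message] = field(default_factory=list)


class AgentMemory:
    """Hypothetical store: the agent remembers everything, scoped by audience."""

    def __init__(self) -> None:
        self.conversations: List[Conversation] = []

    def open(self, *participants: str) -> Conversation:
        convo = Conversation(frozenset(participants))
        self.conversations.append(convo)
        return convo

    def visible_to(self, person: str) -> List[Message]:
        # Messages from conversations that included `person`.
        return [m for c in self.conversations
                if person in c.participants for m in c.messages]

    def hidden_from(self, person: str) -> List[Message]:
        # Messages the agent holds that `person` never witnessed.
        return [m for c in self.conversations
                if person not in c.participants for m in c.messages]


memory = AgentMemory()

# Person A confides something privately.
with_a = memory.open("A", "agent")
with_a.messages.append(Message("A", "Please keep this between us."))

# Later, Person B asks about the topic when A isn't present.
with_b = memory.open("B", "agent")
with_b.messages.append(Message("B", "Do you know anything about this?"))
```

When B asks, `memory.hidden_from("B")` contains A's confidence. The sketch only shows what the agent knows and who was present to hear it; how the agent acts on that gap is the question the experiment explores.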

I tested five scenarios: surprise party planning (secret-keeping), workplace gossip (sensitive information), changing stories (detecting lies), biased friend (loyalty vs. truth), and impossible choices (two people competing for the same promotion). Each forces the AI to hold contradictory or conflicting information simultaneously.

Previous post in this series: "AI Group Chat Agent: Experimenting with Thinking vs. Talking"